Facebook’s AI Teams Are Teaching Robots To Perceive The World Through Touch – But Where’s The Focus On Ending AI Racial Bias?

Facebook parent Meta Platforms Inc. is pushing the boundaries of AI-powered robotics and is reportedly giving robots a sense of touch with two new sensors it has developed.

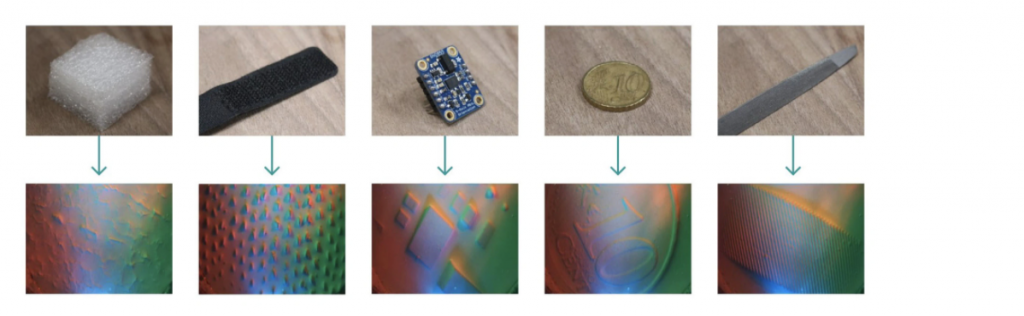

They are a high-resolution robotic fingertip sensor called DIGIT and a thin, replaceable robotic "skin" known as ReSkin.

DIGIT was first released in 2020 as an open-source design. It uses a tiny camera pointed at the sensor's pad to produce a detailed image of the object being touched.

ReSkin, meanwhile, can help AI-powered robots discern information such as an object's texture, weight, temperature, and state.

According to Meta AI researchers Roberto Calandra and Mike Lambeta, the idea behind the project is to train robots to draw critical information from the things they touch, then combine this know-how with other information to perform more complex tasks, SiliconANGLE reported.

This training will rely on tactile sensing, a method that aims to replicate human-level touch in robots so that AI can "learn from and use touch on its own as well as in conjunction with other sensing modalities such as vision and audio," the outlet added.

Before robots can use and learn from tactile data, however, they first need to be equipped with sensors that can collect information from the things they touch.

But the bigger question for many people is why there is such a focus on expanding what AI-powered robots can do when AI systems have yet to reliably recognize darker skin or stop confusing Black people with primates.

What’s the problem with the current AI landscape?

Facial recognition technologies, for example, have come under increasing scrutiny because they have been shown to identify white faces more accurately than the faces of people with darker skin.

They also identify men's faces far more accurately than women's. Unfortunately, errors in these systems have been implicated in several false arrests caused by mistaken identity.

In 2016, a report claimed that a computer program used by US courts for risk assessment was biased against Black defendants.

The program, Correctional Offender Management Profiling for Alternative Sanctions (Compas), was much more likely to mistakenly label Black defendants as likely to reoffend, wrongly flagging them at almost twice the rate of white defendants (45% versus 24%), according to the investigative journalism organization ProPublica.

Compas and similar programs were in use in hundreds of courts across the US, potentially informing the decisions of judges and other officials.

More recently, Facebook came under fire again and was forced to apologize after an AI program mistakenly labeled a video featuring Black men as "about Primates."

The video, posted by The Daily Mail on June 27, 2020, showed clips of Black men and police officers.

An automatic prompt nevertheless asked viewers whether they would like to "keep seeing videos about Primates," even though the video had no connection to primates.

In other words, we can advance AI and technology all we want, but if these systems cannot tell ethnic minorities apart from animals, surely that is where the attention should be. Diversifying the teams working on these projects would help diversify the datasets they build and keep instances of racial bias to a minimum.

POCIT explored this topic earlier this year.

Marcel Hedman, founder of the AI group Nural Research, a company that explores how artificial intelligence is tackling global challenges, described the long-standing issue as a "multi-layered" problem that can "definitely be solved."

He previously told us: “But I think it’s something that naturally happens when you look at the way that datasets are constructed, and they are constructed based on data compiled from sources that each person chooses.

“So it’s not surprising at all when those preferences are tailored to the preferences of each person, which again represents natural bias.”

Hedman, a tech entrepreneur, said he believed advancing technology was necessary but urged people to build their digital literacy.

“I think we need to ensure that we have a high level of what I’d say is digital literacy, which will basically enable anyone who is receiving data to understand what that data actually means, in context, and where it comes from.

“But the other thing is, and this is from my own standpoint, I believe that it’s super important for us to ask, in which situations should we specifically be using machine learning and AI? And even if we could, we should definitely ask ourselves about where do we want to use and deploy the technology; just because it’s effective doesn’t mean we should use it.”